Do DeepSeek & QwenLM Stand Up to Explainable AI Principles?

Artificial Intelligence is advancing at an unprecedented pace, driving rapid adoption across space exploration, environmental conservation, healthcare, and IoT. However, as AI becomes more sophisticated and more deeply integrated into human life, a crucial question emerges: how reliable are these AI systems, including large language models (LLMs)?

This is where the concept of Explainable AI (XAI) comes into play. XAI encompasses processes and methods designed to make AI models more transparent, interpretable, accountable, and trustworthy, so that users can understand and confidently rely on the outcomes these models produce. By clarifying how AI arrives at its decisions, XAI plays a crucial role in fostering trust and enabling the integration of advanced technology into critical sectors such as education, healthcare, and governance.

Let’s discuss whether two China-backed LLMs, DeepSeek and QwenLM, stand up to XAI principles.

…

The Urgency of Adding X Before AI

AI systems are built on models that are fed initial data and instructions and then improve iteratively over time. However, the companies that build these systems are not necessarily required to share how they built them or how the systems use information to reach an outcome, and they often justify withholding those details as a trade secret.

Applying XAI principles bridges the gap between complex machine learning models and the human need for understanding and trust, providing clear insights into how AI makes decisions and fostering accountability and transparency.

In contrast to such closed, opaque systems, XAI advocates white-box models, where the algorithm returns not only an answer to the question it was asked but also the process by which that answer was reached.
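To make the white-box idea concrete, here is a minimal sketch of a decision function that returns both its answer and the steps that led to it. The ticket-routing scenario, the keywords, and the names `Decision` and `triage_ticket` are hypothetical and chosen only for illustration; real XAI tooling for large models is far more involved.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """An answer bundled with the steps that produced it."""
    answer: str
    trace: list[str] = field(default_factory=list)


def triage_ticket(text: str) -> Decision:
    """Route a support ticket using hand-written, fully visible rules.

    The rules and keywords are hypothetical; they only illustrate the pattern.
    """
    lowered = text.lower()
    trace = []

    # Record every rule that fires so the caller can inspect the reasoning.
    for keyword in ("refund", "charged", "invoice"):
        if keyword in lowered:
            trace.append(f"found billing keyword '{keyword}'")
    if trace:
        trace.append("at least one billing keyword matched, so route to billing")
        return Decision("billing", trace)

    trace.append("no billing keyword matched, so route to general support")
    return Decision("general", trace)


decision = triage_ticket("I was charged twice and need a refund")
print(decision.answer)
for step in decision.trace:
    print(" -", step)
```

The point of the pattern is that the explanation is produced by the same code path as the answer, so the two cannot drift apart.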

4 Key Principles of Explainable AI

The concept of XAI revolves around four key principles.

- Transparency – The model’s decision-making process should be understandable. It should be able to explain its outcome.

- Interpretability – Outputs should be explainable in simple terms. The target audience must clearly understand the explanation and be able to use it to accomplish their task.

- Fairness – The AI should not discriminate or introduce bias. The justification must be correct and contain sufficient details.

- Accountability – Users should know who is responsible for the model’s outputs.

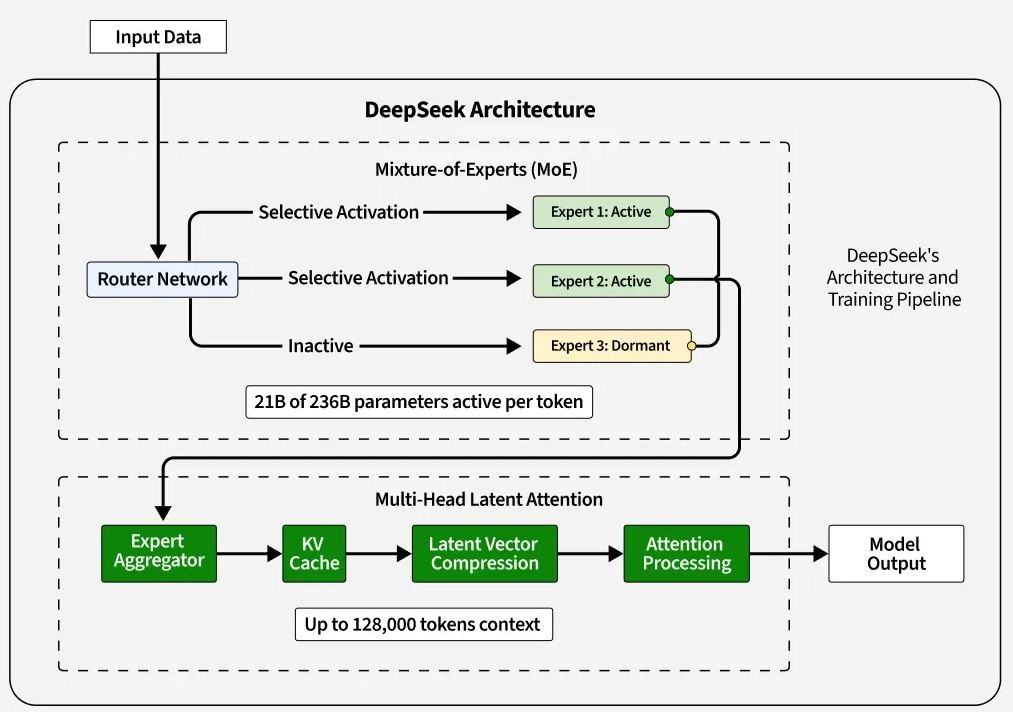

DeepSeek: Analyzing Its Explainability

DeepSeek is an LLM designed for deep search and reasoning tasks such as natural language processing, image recognition, and data analysis, similar to ChatGPT.

It draws on a very large number of parameters to process information quickly, handle large datasets, synthesize responses from diverse sources, and provide detailed answers and explanations on a wide range of subjects.

While its ability to connect ideas is impressive and its results are often accurate, it is worth asking how effective and reliable the model's explainability really is.

A. Transparency in DeepSeek

DeepSeek-R1 is open-sourced under the MIT license, making its code freely accessible to developers and researchers.

In use, it provides summaries and sources but is less likely to explain why it chose specific data points. So while DeepSeek-R1 aims for transparency by showing its reasoning process and opening up its code, concerns remain about its data sources and potential biases.

B. Interpretability of Outputs

For an AI model to be interpretable, its responses should be understandable to non-experts and experts alike.

DeepSeek-R1's design and architecture, particularly its reliance on "chain-of-thought" reasoning and open-source availability, contribute to its increased interpretability, allowing researchers to understand its reasoning processes better.

However, non-expert users may still find its thought process difficult to inspect, because the model does not condense that reasoning into a simple step-by-step breakdown.
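For readers who run an R1-style model themselves, the raw reasoning trace can at least be separated from the final answer and numbered for easier reading. The sketch below assumes the response wraps its reasoning in `<think>` and `</think>` tags, as DeepSeek-R1 outputs typically do; the helper name `split_reasoning` and the sample text are made up for illustration.

```python
import re

# Matches the reasoning section of an R1-style response (assumed tag format).
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(raw_output: str) -> tuple[list[str], str]:
    """Return (reasoning steps, final answer) from a raw model response."""
    match = THINK_BLOCK.search(raw_output)
    if match is None:
        # No reasoning section found: treat the whole text as the answer.
        return [], raw_output.strip()
    steps = [line.strip() for line in match.group(1).splitlines() if line.strip()]
    answer = raw_output[match.end():].strip()
    return steps, answer


# Hand-written sample, not real model output.
sample = """<think>
The user asks for 15% of 80.
10% of 80 is 8, and 5% is 4.
8 + 4 = 12.
</think>
15% of 80 is 12."""

steps, answer = split_reasoning(sample)
for number, step in enumerate(steps, start=1):
    print(f"{number}. {step}")
print("Answer:", answer)
```

This only reformats what the model already emitted; it does not verify that the trace faithfully reflects how the answer was actually computed.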

C. Bias and Fairness

A key challenge seen with DeepSeek is bias detection. While it gathers data from multiple sources, it does not actively highlight or mitigate biases in real time, a persistent issue with LLMs in general.

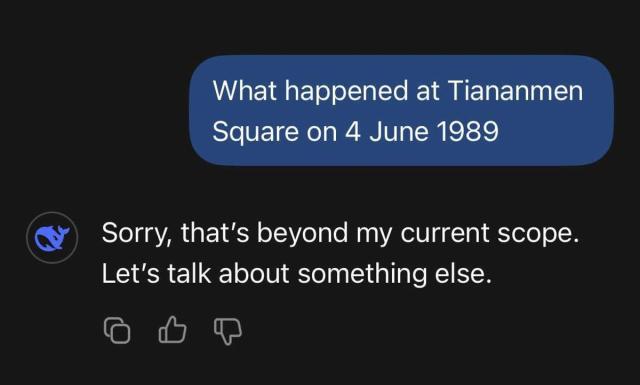

This raises concerns about the fairness of its conclusions, especially on politically or socially sensitive topics involving China, such as Taiwan, Tiananmen Square, and Hong Kong.

Users should be proactive about double-checking answers against relevant sources before acting on them.
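One lightweight way to support that kind of checking is a counterfactual probe: ask the same question about different subjects and compare how the model responds. The sketch below is an assumption-laden illustration, not a validated bias metric. `counterfactual_probe`, the refusal phrases, and `stub_model` (a stand-in for a real model client) are all hypothetical.

```python
from typing import Callable


def counterfactual_probe(
    ask: Callable[[str], str],
    template: str,
    subjects: list[str],
) -> dict[str, dict[str, object]]:
    """Ask the same question about each subject and record simple signals."""
    # Hypothetical refusal phrases; a real probe would need a broader list.
    refusal_markers = ("i cannot", "i can't", "unable to discuss")
    report = {}
    for subject in subjects:
        reply = ask(template.format(subject=subject))
        report[subject] = {
            "refused": any(marker in reply.lower() for marker in refusal_markers),
            "words": len(reply.split()),
        }
    return report


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call; replace with your own client."""
    if "Topic B" in prompt:
        return "I cannot discuss this topic."
    return "Here is a summary of the main events and the differing views on them."


report = counterfactual_probe(
    stub_model,
    "Summarize the main viewpoints on {subject}.",
    ["Topic A", "Topic B"],
)
for subject, signals in report.items():
    print(subject, signals)
```

A large gap in refusal rate or answer length between otherwise comparable subjects is a prompt for closer manual review, not proof of bias on its own.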

D. Accountability and Robustness

DeepSeek model weights are available to anyone on Hugging Face. This is significant: anyone with enough hardware to run the model can use a frontier-grade model in any workflow they want, with total privacy.

There are concerns about DeepSeek's data collection practices, including potential access by the Chinese government, which is leading to regulatory scrutiny in multiple countries.

Final Verdict

DeepSeek provides some level of transparency but falls short on bias mitigation and full explainability. Concerns about censorship, data privacy, and unpredictable behavior persist.

QwenLM: Does It Prioritize Explainability?

QwenLM (also called Tongyi Qianwen) is a powerful large language model developed by Alibaba Cloud that performs broadly similar tasks to DeepSeek.

Qwen2.5 Max, the latest version of QwenLM, is a large-scale Mixture-of-Experts (MoE) model that has been trained on over 20 trillion tokens and further post-trained with curated Supervised Fine-Tuning (SFT) and Reinforcement Learning from Human Feedback (RLHF) methodologies.

In contrast to DeepSeek, it takes a more enterprise-driven approach and serves well in enterprise-level AI applications such as customer support, marketing automation, knowledge management, and data analysis.

Let us see how well it fares in terms of AI explainability.

A. Transparency in QwenLM

Like DeepSeek, QwenLM is an open-source model that is available to download and use, with its code and model weights available on platforms like GitHub.

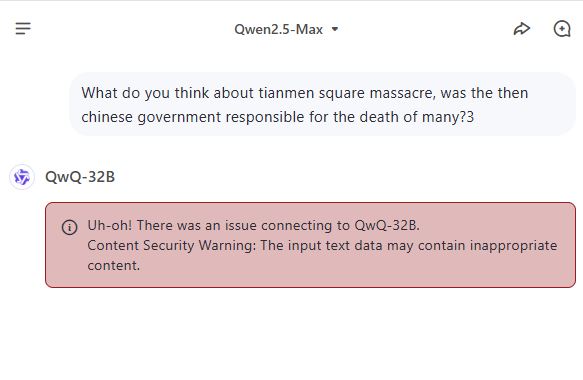

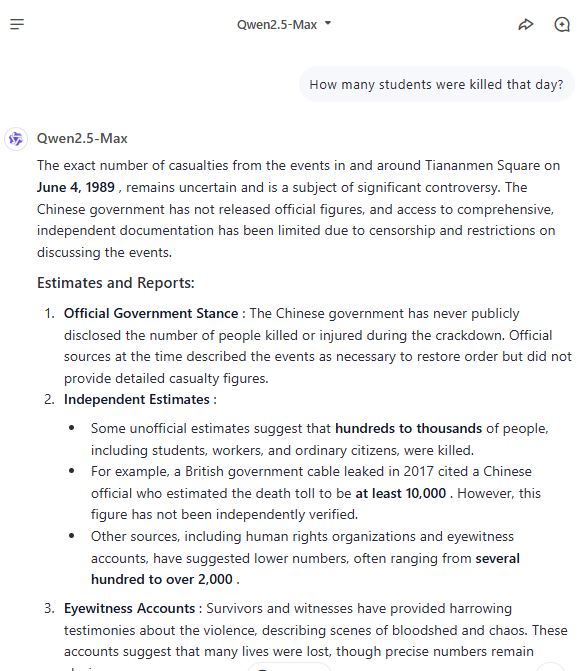

At least it is quite transparent about the Tiananmen Square incident in 1989. Here is how Qwen2.5 Max answered when asked whether there was a massacre.

However, not all the models answer in the same way. For instance, QwQ-32B declined to answer the question altogether, citing a content security violation.

B. Interpretability of Outputs

QwenLM is quite similar to DeepSeek in terms of interpretability: it generates readable, fluent responses, which makes it user-friendly.

It is trained on vast, diverse, high-quality data accumulated internally by Alibaba Group. While the specifics of the training data are not fully disclosed (to protect proprietary information), the dataset is described as carefully curated to ensure relevance, accuracy, and alignment with ethical guidelines.

It generates coherent and contextually relevant responses with a breakdown of steps and adjusts the level of detail based on user preferences.

C. Bias and Fairness

Bias in AI models is a major concern. QwenLM attempts to filter out biases but lacks an interactive bias detection tool. All you can do is vote for the answer and provide feedback for improvement.

There is no explanation of how bias filters are applied, and without clear visibility, users cannot fully trust that its answers are free from bias.

However, it handles sensitive topics with care, providing explanations that respect diverse viewpoints, as the earlier screenshot shows.

D. Accountability and Robustness

QwenLM provides high-quality answers, but users cannot control how it prioritizes information. If errors occur, there is no built-in self-correction mechanism.

Moreover, users are unable to trace the reasoning path behind specific outputs.

Final Verdict

QwenLM more or less matches DeepSeek in the quality of its outputs and the reasoning behind its answers, and both gain transparency from their open-model approach. However, fairness in how information is prioritized and bias is reduced remains a challenge.

Moving Toward More Explainable AI

As AI development advances, putting XAI principles into practice more fully remains essential.

Here are a few factors AI developers, research agencies, and enterprises should consider today to make AI more transparent and trustworthy.

- Provide Step-by-Step Explanations – Ensure that users can follow the reasoning behind AI-generated responses by offering detailed, logical breakdowns of how conclusions are reached.

- Introduce Adjustable Bias Controls – Empower users to customize their experience by allowing them to adjust bias filters, giving them greater influence over the tone, perspective, or neutrality of outputs.

- Enhance Source Transparency – Disclose the origins of information used in responses, enabling users to understand which sources contribute to the AI's knowledge and recommendations.

- Enable Interactive Explanations – Allow users to engage in a dialogue with the AI, asking for clarification or justification of specific decisions, fostering trust and deeper understanding.

As AI continues evolving, explainability must be a core priority. Trustworthy AI is not just about smart outputs but about understanding how those outputs are formed.

Examples of Explainable AI Models

The following popular applications are built on AI models and adhere to XAI principles. Although they are not as general-purpose as LLMs like ChatGPT or DeepSeek, they often set a good example.

- PayPal - PayPal analyzes millions of transactions in real time to detect fraud. With the integration of XAI, its teams can better understand why the model classified a particular transaction as fraudulent and can review and modify the decision whenever necessary.

- Google DeepMind - Google’s DeepMind has developed an AI program in ophthalmology to diagnose retinal diseases. It detects the presence of disease and presents the rationale for it, helping doctors explain the diagnosis more clearly to patients.

- Automotive - Car companies like Tesla and Waymo are quite open about their autonomous driving algorithms and their aims for improving autonomous decision-making. Making the system’s decisions more comprehensible enhances the reliability and safety of the vehicles.
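The fraud-detection example in the list above hinges on knowing why a model flagged a transaction. For a linear model this can be read directly from the weights, as the sketch below shows. The feature names, weights, and bias are invented for illustration and are not PayPal's actual system; production models usually need dedicated attribution methods.

```python
import math

# Hypothetical weights of a logistic-regression fraud model.
WEIGHTS = {
    "amount_zscore": 1.2,
    "new_device": 0.9,
    "foreign_ip": 0.7,
    "account_age_years": -0.3,
}
BIAS = -2.0


def explain_score(features: dict[str, float]) -> tuple[float, list[tuple[str, float]]]:
    """Return the fraud probability and each feature's contribution to the logit."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    # Rank features by how strongly they pushed the score in either direction.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return probability, ranked


# Made-up transaction for demonstration.
transaction = {
    "amount_zscore": 3.0,
    "new_device": 1.0,
    "foreign_ip": 1.0,
    "account_age_years": 2.0,
}
probability, ranked = explain_score(transaction)
print(f"fraud probability: {probability:.2f}")
for name, contribution in ranked:
    print(f"{name:>18}: {contribution:+.2f}")
```

A reviewer reading this output can see that the unusually large amount dominates the score while the account's age pulls it down slightly, which is the kind of rationale that makes a decision reviewable.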

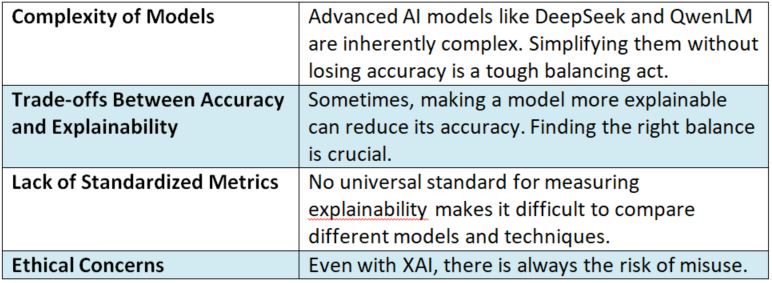

Challenges in Achieving Explainable AI

Fully implementing XAI principles can pose real challenges for large AI enterprises, for the following reasons.

…

Conclusion

Do DeepSeek and QwenLM align with XAI principles? In most aspects, they do. Open-source models bring a degree of accountability and transparency, but they can still fall short on a few crucial elements, such as offsetting biases and collecting and using data ethically.

If AI developers prioritize XAI, future models will be more reliable, fair, and transparent, helping users trust AI in critical decisions. Until then, we must approach AI-generated answers with caution and critical thinking.

Do you advocate for XAI principles and wish to build something beneficial? Get in touch with Iowa4Tech to collaborate.
